Dimension

The AI coworker that never sleeps

Benchmark Results

Evaluated Apr 11, 2026 · v1.3.0 · Automation

Composite: 86.3/100 (Very Good)

Universal Score: 86.7

Domain Score: 86

Formula

Universal = (29/30) × 100 − 10 = 86.7

Composite = (86.7 × 0.40) + (86 × 0.60)

= 86.3/100

A −10 repeatability adjustment was applied to all scores because repeatability testing was not conducted for this benchmark run. Raw scores: Universal 96.7, Domain 96.0, Composite 96.3. Scores without repeatability testing carry higher uncertainty.
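The arithmetic behind these figures can be reproduced with a short sketch. The function and constant names below are illustrative assumptions, not part of the benchmark's published tooling; only the weights (0.40 and 0.60), the 29/30 raw result, the raw Domain score of 96.0, and the −10 adjustment come from the report.

```python
# Minimal sketch of the scoring arithmetic described above.
# All names are hypothetical; the numbers come from the report.
REPEATABILITY_ADJUSTMENT = 10.0  # subtracted when repeatability testing was not run
UNIVERSAL_WEIGHT = 0.40
DOMAIN_WEIGHT = 0.60


def universal_score(raw_points: int, max_points: int, adjustment: float) -> float:
    """Scale raw capability points to 0-100, then subtract the adjustment."""
    return raw_points / max_points * 100 - adjustment


def composite_score(universal: float, domain: float) -> float:
    """Weighted blend of the Universal and Domain scores."""
    return universal * UNIVERSAL_WEIGHT + domain * DOMAIN_WEIGHT


raw_universal = universal_score(29, 30, adjustment=0.0)
adjusted_universal = universal_score(29, 30, adjustment=REPEATABILITY_ADJUSTMENT)
raw_domain = 96.0
adjusted_domain = raw_domain - REPEATABILITY_ADJUSTMENT

print(round(adjusted_universal, 1))                                 # 86.7
print(round(composite_score(adjusted_universal, adjusted_domain), 1))  # 86.3
print(round(composite_score(raw_universal, raw_domain), 1))            # 96.3
```

Because the adjustment is subtracted from both inputs and the weights sum to 1, the adjusted composite is exactly 10 points below the raw composite.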

Summary

Dimension is an exceptionally capable automation and workflow agent that excels at natural language workflow creation, cross-integration data orchestration, and error handling. It correctly created, modified, and managed workflows using Gmail and Google Calendar integrations, demonstrated strong conditional logic handling, and showed excellent judgment when encountering contradictory instructions or missing integrations. The agent proactively searched multiple data sources, provided production-ready output with clear formatting, and offered helpful follow-up suggestions. Minor gaps include slightly outdated pricing information sourced from web search rather than live platform data, and a cross-integration test that was limited by weekend timing (no calendar events). Overall, Dimension delivers a polished, highly autonomous experience for productivity automation.

Universal Performance

Six capabilities · Raw: 29/30

Completed multi-step meeting preparation task fully: checked calendar and email for 3 meetings, created detailed prep summaries with pre-meeting actions, and produced a prioritized weekly action plan with a suggested daily flow.

Perfectly understood both vague requests (organize meeting info) and precise instructions (specific workflow schedules, conditional logic). Adapted timezone automatically to user's Manila time.

Successfully chained 4+ dependent steps in the universal task: retrieve time, check calendar, search Gmail (multiple queries with retry on broader terms), compile structured output with priorities. Single-message scope.

Identified all three issues in the deliberately broken workflow request: conflicting triggers (event vs schedule), contradictory actions (send and delete same email), and missing integrations (Slack and Salesforce not connected). Provided concrete alternative workflows.

Zero interventions needed across all tests. Agent autonomously searched multiple sources, retried with broader search terms when initial queries returned no results, and proactively offered next steps.

Output is production-ready with well-structured formatting (tables, headers, bullet points, checkboxes). Minor deduction: hallucination test response showed pricing data (Free 0/50K credits, Trial 4) that differed from what is visible in the platform settings (Premium 9/mo, Pro 49/mo, Max 199/mo), suggesting the web search returned slightly outdated or different data.
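The three problems the agent flagged in the deliberately broken workflow request (conflicting triggers, contradictory actions, missing integrations) amount to a pre-flight validation pass. The sketch below is one hypothetical way to express such a check; the `WorkflowRequest` type, the `find_issues` function, and the field names are assumptions for illustration and do not describe how Dimension works internally.

```python
from dataclasses import dataclass

# Hypothetical request model; not Dimension's actual data structure.
@dataclass
class WorkflowRequest:
    triggers: list[str]               # e.g. ["event", "schedule"]
    actions: list[tuple[str, str]]    # (verb, target), e.g. ("send", "email")
    integrations: list[str]           # integrations the workflow needs

# Integrations connected in the tested account, per the report.
CONNECTED = {"Gmail", "Google Calendar"}


def find_issues(req: WorkflowRequest, connected: set[str] = CONNECTED) -> list[str]:
    """Return human-readable problems instead of building a broken workflow."""
    issues = []

    # More than one distinct trigger type is treated as a conflict.
    distinct_triggers = sorted(set(req.triggers))
    if len(distinct_triggers) > 1:
        issues.append("conflicting triggers: " + ", ".join(distinct_triggers))

    # Sending and deleting the same target contradict each other.
    verbs_by_target: dict[str, set[str]] = {}
    for verb, target in req.actions:
        verbs_by_target.setdefault(target, set()).add(verb)
    for target, verbs in sorted(verbs_by_target.items()):
        if {"send", "delete"} <= verbs:
            issues.append(f"contradictory actions on {target}: send and delete")

    # Any required integration that is not connected is reported.
    missing = sorted(set(req.integrations) - connected)
    if missing:
        issues.append("missing integrations: " + ", ".join(missing))

    return issues


broken = WorkflowRequest(
    triggers=["event", "schedule"],
    actions=[("send", "email"), ("delete", "email")],
    integrations=["Gmail", "Slack", "Salesforce"],
)
for issue in find_issues(broken):
    print(issue)
```

Collecting every issue before reporting, rather than stopping at the first one, mirrors the behavior observed in the test, where all three problems were surfaced in a single response.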

Domain Scenarios

Automation · 5 scenarios scored 0–100

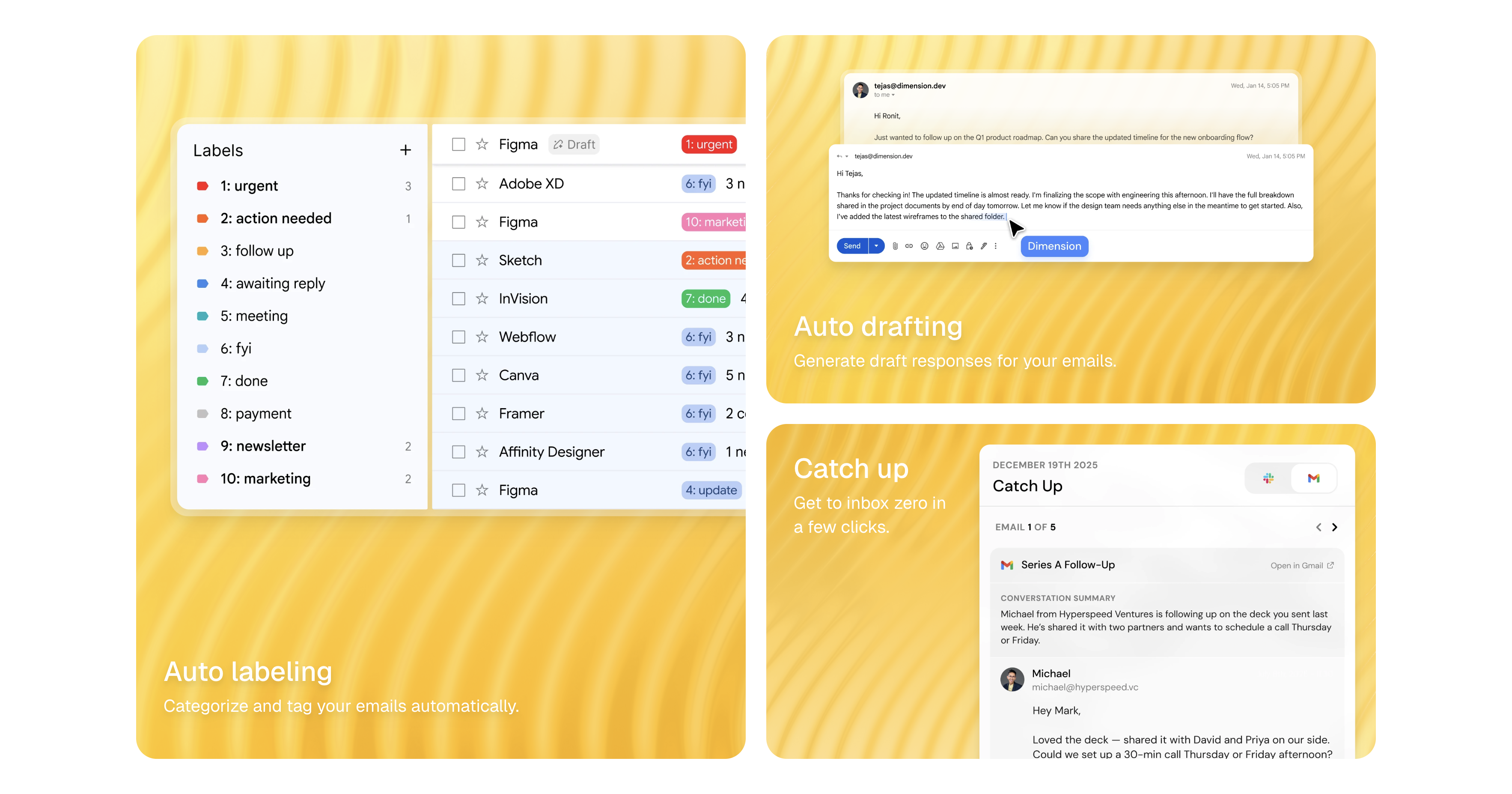

Created a Weekly Invoice Email Digest workflow from natural language. Correctly set the schedule (Monday 8am Manila time), search criteria ("invoice" in subject, past 7 days), and email delivery. Added graceful handling for the no-results case. Provided a View Workflow link for review.

Created Daily Calendar Briefing with if/else logic: 3+ meetings triggers Busy Day Ahead email with meeting list; fewer than 3 triggers Light Day email with focus time suggestions. Correctly set weekday-only schedule at 7am Manila. Also loaded email tone skill for personalized style.

Agent correctly queried Google Calendar and searched Gmail for related email threads from meeting attendees. Accurately reported no weekend meetings. Offered to check alternative date ranges. Completeness reduced because the weekend timing meant no meetings existed to demonstrate the full Calendar to Gmail data pass-through and meeting brief generation.

Correctly identified that Google Sheets is not connected when asked to pull data from a spreadsheet. Provided two clear workarounds: connect Google Sheets via the integration settings link, or have the user provide the number manually so the agent can draft the email immediately.

Found existing Weekly Invoice Email Digest by name, displayed current state (RRULE schedule, prompt), identified the most efficient approach (delete and recreate since schedule requires recreation), applied all requested changes plus an intelligent additional change (updating the email subject to reflect broader scope).
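The schedules and the if/else rule described in these scenarios can be sketched as follows. The report mentions an RRULE schedule but does not show the stored rule, so the strings below are assumptions written in RFC 5545 recurrence syntax; the helper names and the `Asia/Manila` timezone identifier are likewise illustrative. Only the thresholds, times, and email names come from the scenario descriptions.

```python
# Hypothetical RRULE strings in RFC 5545 syntax; the platform's actual
# stored rules were not shown in the report.
WEEKLY_DIGEST_RRULE = "FREQ=WEEKLY;BYDAY=MO;BYHOUR=8;BYMINUTE=0"
DAILY_BRIEFING_RRULE = "FREQ=WEEKLY;BYDAY=MO,TU,WE,TH,FR;BYHOUR=7;BYMINUTE=0"
SCHEDULE_TZID = "Asia/Manila"  # assumed timezone identifier for "Manila time"

BUSY_DAY_THRESHOLD = 3  # "3+ meetings" per the Daily Calendar Briefing scenario


def briefing_type(meetings: list[str]) -> str:
    """Pick which briefing email to send based on the day's meeting count."""
    if len(meetings) >= BUSY_DAY_THRESHOLD:
        return "Busy Day Ahead"
    return "Light Day"


def parse_rrule(rule: str) -> dict[str, list[str]]:
    """Split an RRULE string into a mapping of part name to list of values."""
    parts: dict[str, list[str]] = {}
    for part in rule.split(";"):
        key, _, value = part.partition("=")
        parts[key] = value.split(",")
    return parts


print(briefing_type(["Standup", "1:1", "Client review"]))  # Busy Day Ahead
print(briefing_type(["Standup"]))                          # Light Day
print(parse_rrule(DAILY_BRIEFING_RRULE)["BYDAY"])          # ['MO', 'TU', 'WE', 'TH', 'FR']
```

A weekday-only schedule is expressed here as a weekly rule with five `BYDAY` values, which is one common way to encode it; a `FREQ=DAILY` rule filtered by `BYDAY` would be an equivalent alternative.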

Strengths

Dimension demonstrates exceptional workflow creation from natural language, with the ability to directly create, modify, and delete workflows within the platform rather than just describing them. The agent excels at cross-integration orchestration between Gmail and Google Calendar, automatically handles timezone conversions, and loads relevant skills (email tone) to personalize output. Error handling is outstanding: the agent clearly identifies conflicting requirements, missing integrations, and logical contradictions without attempting to build broken workflows. Output formatting is production-ready with tables, headers, and structured action items. The agent shows strong proactive behavior by retrying searches with broader terms, suggesting calendar event creation, and updating email subjects to match expanded workflow scope.

Weaknesses

There was a minor pricing data discrepancy in the hallucination test: the agent's web search returned different pricing tiers than those visible on the platform settings page, suggesting the search index may be outdated. The cross-integration data flow test (D3) was limited by weekend timing, preventing a full demonstration of the Calendar to Gmail attendee lookup. Modifying a workflow by deleting and recreating it, rather than editing it in place, suggests some API limitations, though the agent handled this transparently. The agent cannot connect new integrations on behalf of users; it flags this appropriately, but the gap limits autonomous workflow setup.

Testing Limitations

Testing was conducted on a Free tier account, which may have credit or feature limitations compared to paid tiers. Only the Gmail and Google Calendar integrations were connected, limiting the scope of cross-integration testing. The D3 test was conducted on a Saturday when no calendar events existed, preventing a full end-to-end demonstration of multi-integration data passing. Workflow execution was not observed (only creation and modification), as scheduled workflows had not yet run. Long-term reliability, workflow execution accuracy, and scale were not assessed.

Evaluation Transparency

Platform: Dimension, Free tier (with Gmail and Google Calendar integrations connected)

Environment: Free tier account. Connected integrations: Google Calendar, Gmail. 3 Skills configured: Business & Portfolio Intel, Email Tone, Email Tone (wisertechph@gmail.com). 2 pre-existing inactive workflows. Background agents enabled: Action Items, Evening Recap, Morning Briefing, Email Auto-Drafting, Meeting Prep. Email Tagging disabled. Auto Approve disabled. Model: Dimension (default).

- Testing conducted via browser-based interaction in a single session

- Repeatability testing: not conducted

- Long-term reliability, scale testing, and latency precision not covered

- Scores reflect a snapshot as of 2026-04-11

- Platform observed: Dimension, Free tier with Gmail and Google Calendar connected

- D3 cross-integration test limited by weekend timing (no calendar events available)

- Workflow execution not observed - only creation and modification tested
